Description

Handling ridiculous amounts of data with probabilistic data structures

Presented by C. Titus Brown

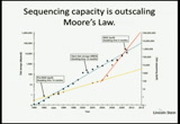

Part of my job as a scientist involves playing with rather large amounts of data (200 gb+). In doing so we stumbled across some neat CS techniques that scale well, and are easy to understand and trivial to implement. These techniques allow us to make some or many types of data analysis map-reducable. I'll talk about interesting implementation details, fun science, and neat computer science.

Abstract

If an extreme talk, I will talk about interesting details/issues in:

- Python as the backbone for a non-SciPy scientific software package: using Python as a frontend to C++ code, esp for parallelization and testing purposes.

- Implementing probabilistic data structures with one-sided error as pre-filters for data retrieval and analysis, in ways that are generally useful.

- Efficiently breaking down certain types of sparse graph problems using these probabilistic data structures, so that large graphs can be analyzed straightforwardly. This will be applied to plagiarism detection and/or duplicate code detection.